- Home

- Online Assessment

- A.I and Assessment

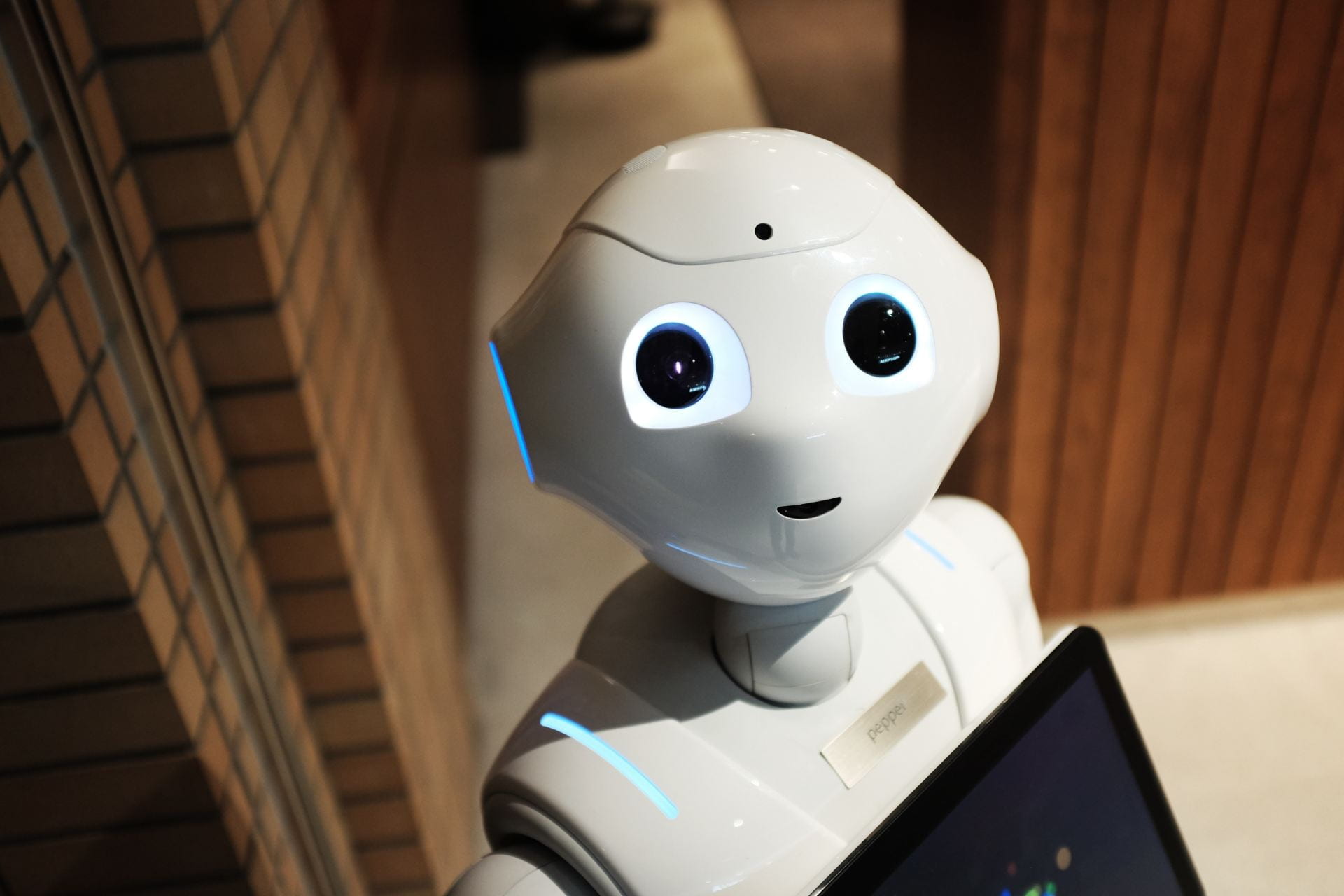

- Artificial intelligence in assessment

Artificial intelligence in assessment

The rapid acceleration of artificial intelligence (AI), or more specifically the availability of AI, has raised concern in the academic community, particularly about maintaining robust assessment.

With the ability to ask an online AI to write assignments, how can we maintain robust assessment practice?

It is vital that we develop assessments that are authentic and reliable, and that deter academic offences of any type as far as possible.

The new level of sophistication in artificial intelligence, exemplified by ChatGPT, presents a new challenge to educators, and reactions in academic and technological circles have varied widely, from alarm through to optimism. Many academic journals, educational institutions and other organisations have rushed to ban the use of AI until it can be better managed, or at least understood. Other institutions have begun to embrace its use.

Overview

What is ChatGPT? What can it do? Below is a 10-minute video that demonstrates how ChatGPT can be used and manipulated to produce different outputs.

It is important to understand the present capabilities and limitations of ChatGPT and other AI tools. Generally they provide overviews and summaries, lack criticality, are unable to apply ideas to current events and situations, and cannot reflect on practices. Their referencing is generally poor, and they are known to invent quotes and sources.

As Higher Education considers how this challenge will be managed, there are three simple steps we can take to mitigate the misuse of AI: promote, prevent and detect.

Promote

We should promote an understanding of the need for academic integrity: not only for the student, but also for the institution.

As such, regulations may need updating to reflect how, and the extent to which, AI is permitted to be used in assessments. It is important to remind students of the purpose of assessment. Assessment is not simply about a grade but about validating learning, and students should be made aware of the employability and critical thinking skills they are developing along this journey.

Prevent

When writing assessments, it is important to develop prompts which challenge a student’s understanding rather than their memory.

- Create prompts which allow for self-reflection, critical analysis and connection to real-life situations.

- Consider asking for drafts, outlines and notes to be submitted as formative assessments, allowing the students’ development to be documented.

- Ask students to include references from texts used during their studies, but not freely available on the internet.

- Multimodal assessment, including elements such as infographics and video presentations, allows students to show their understanding in other ways.

Detect

While there are already several platforms capable of detecting text likely written by an AI, these are unreliable and can already be beaten. As AI tools develop, detection will become more difficult. There are, however, a number of low-tech methods by which we can detect AI-written content.

Familiarity with students’ understanding, abilities and writing styles allows us to detect submissions which may not be their own work. We can look for odd phrasing, repetition, poor referencing, superficial overviews, and a lack of critical analysis. As specialists in our fields, we should review any references which are unfamiliar or contextually out of place. And don’t discount your own intuition. If the submission just feels wrong, investigate further.

While there are certainly challenges ahead, the use of AI in Higher Education also provides fantastic opportunities for teaching, studying, assessment and grading. Using AI as part of the assessment is another method of limiting its use by students. For example, an educator could run the assessment through an AI and then provide the AI-generated essay to students, asking them to critique it and comment on its failings and missing elements.

“The best ways are to create an educational environment that promotes academic integrity and encourages students to do their own work, and to create assignments that require original thinking and cannot be easily completed by a machine.

By setting clear expectations and consequences and providing resources and support to help students succeed, educators can help to create a culture of academic honesty.”

Further information

You can find additional information on writing more authentic assessment questions on the following page: Open-book assessments – Digital Education Support (lincoln.ac.uk)

Please also see the guidance from the Quality Assurance Agency (QAA) here: QAA briefs members on artificial intelligence threat to academic integrity (web).

For more information on ChatGPT, please see the following page: Educator Considerations for ChatGPT – OpenAI API